There aren't a lot of them around,īut Hemmings (Motor News) ususally has ten or so listed. Odd looking with the encased front bumper. > have on this funky looking car, please e-mail.īricklins were manufactured in the 70s with engines from Ford. > of production, where this car is made, history, or whatever info you

If anyone can tellme a model name, engine specs, years > the front bumper was separate from the rest of the body. It was a 2-door sports car, looked to be from the late 60s/ > I was wondering if anyone out there could enlighten me on this car I saw The second most similar document is a reply that quotes the original message hence has many common words: > print twenty.dataĪrticle-I.D.: reed.1993Apr21.032905.29286 brought to you by your neighborhood Lerxst. Have on this funky looking car, please e-mail. Of production, where this car is made, history, or whatever info you The front bumper was separate from the rest of the body.

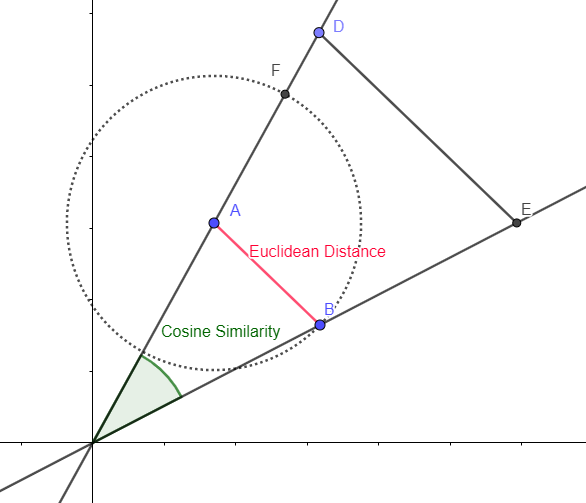

It was a 2-door sports car, looked to be from the late 60s/Įarly 70s. I was wondering if anyone out there could enlighten me on this car I saw Organization: University of Maryland, College Park The first result is a sanity check: we find the query document as the most similar document with a cosine similarity score of 1 which has the following text: > print twenty.data Hence to find the top 5 related documents, we can use argsort and some negative array slicing (most related documents have highest cosine similarity values, hence at the end of the sorted indices array): > related_docs_indices = cosine_similarities.argsort() > cosine_similarities = linear_kernel(tfidf, tfidf).flatten()Īrray([ 1. In this case we need a dot product that is also known as the linear kernel: > from import linear_kernel kernels in machine learning parlance) that work for both dense and sparse representations of vector collections. Scikit-learn already provides pairwise metrics (a.k.a. With 89 stored elements in Compressed Sparse Row format> To get the first vector you need to slice the matrix row-wise to get a submatrix with a single row: > tfidf The scipy sparse matrix API is a bit weird (not as flexible as dense N-dimensional numpy arrays). Row-normalised have a magnitude of 1 and so the Linear Kernel is sufficient to calculate the similarity values. the first in the dataset) and all of the others you just need to compute the dot products of the first vector with all of the others as the tfidf vectors are already row-normalized.Īs explained by Chris Clark in comments and here Cosine Similarity does not take into account the magnitude of the vectors. Now to find the cosine distances of one document (e.g. With 1787553 stored elements in Compressed Sparse Row format> > tfidf = TfidfVectorizer().fit_transform(twenty.data) > from sklearn.datasets import fetch_20newsgroups

I am not sure how to use this output in order to calculate cosine similarity, I know how to implement cosine similarity with respect to two vectors of similar length but here I am not sure how to identify the two vectors.įirst off, if you want to extract count features and apply TF-IDF normalization and row-wise euclidean normalization you can do it in one operation with TfidfVectorizer: > from sklearn.feature_extraction.text import TfidfVectorizer Tfidf = ansform(testVectorizerArray)Īs a result of the above code I have the following matrix Fit Vectorizer to train set Print ansform(trainVectorizerArray).toarray() Print 'Transform Vectorizer to test set', testVectorizerArray Print 'Fit Vectorizer to train set', trainVectorizerArray TestVectorizerArray = ansform(test_set).toarray() TrainVectorizerArray = vectorizer.fit_transform(train_set).toarray() Vectorizer = CountVectorizer(stop_words = stopWords) I followed the examples in the article with the help of the following link from stackoverflow, included is the code mentioned in the above link (just so as to make life easier) from sklearn.feature_extraction.text import CountVectorizerįrom sklearn.feature_extraction.text import TfidfTransformer Unfortunately the author didn't have the time for the final section which involved using cosine similarity to actually find the distance between two documents. I was following a tutorial which was available at Part 1 & Part 2.
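The answer's normalization argument can be checked without scikit-learn. The following is a minimal pure-Python sketch, not the code from the post: the `docs` rows are made-up term weights and the helper names (`l2_normalize`, `dot`, `cosine`) are hypothetical. It shows that once every row is scaled to unit length, a plain dot product gives the same value as the textbook cosine formula, and that sorting those values ranks the documents.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit Euclidean length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def dot(a, b):
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cosine(a, b):
    """Textbook cosine similarity: dot product divided by both magnitudes."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


# Hypothetical term-weight rows, one per document (assumed data, not from the post).
docs = [
    [1.0, 2.0, 0.0, 1.0],
    [2.0, 4.0, 0.0, 2.0],  # same direction as docs[0], twice the magnitude
    [0.0, 1.0, 3.0, 0.0],
    [1.0, 0.0, 0.0, 1.0],
]

# Row-normalize once, as a tf-idf vectorizer with L2 normalization would.
normalized = [l2_normalize(d) for d in docs]
query = normalized[0]

# On unit-length rows the dot product alone is the cosine similarity.
similarities = [dot(query, row) for row in normalized]

# Rank document indices from most to least similar and keep the top 3.
top_indices = sorted(
    range(len(docs)), key=lambda i: similarities[i], reverse=True
)[:3]

print(similarities)
print(top_indices)
```

Because `docs[1]` points in the same direction as `docs[0]`, both score (up to floating-point rounding) 1 against the query, which illustrates why magnitude plays no role in the ranking.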
